
3/31/2023 Dim3 to OpenCL

In each iteration, one thread block loads one tile of A and one tile of B from global memory to shared memory, performs the computation, and keeps the temporary result of C in registers. After all iterations are done, the thread block stores one tile of C into global memory. For example, a thread block can compute C0,0 in two iterations: C0,0 = A0,0 B0,0 + A0,1 B1,0.

HIP offers several benefits over OpenCL: developers can code in C++ and mix host and device C++ code in their source files. The HIP API is less verbose than OpenCL, and C++ is familiar to CUDA developers. HIP C++ code can use templates, lambdas, classes, etc.

The FindOpenCL CMake module defines the following variables: OpenCL_FOUND (true if OpenCL was found), OpenCL_INCLUDE_DIRS (include directories for OpenCL), OpenCL_LIBRARIES (link against this library to use OpenCL), and OpenCL_VERSION_STRING (highest supported OpenCL version, e.g. …).

Given an object of type cl::Program::Sources, a cl::Program object is created and associated with a context, then built for a particular set of devices.
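The tiling scheme described in this post can be sketched on the CPU to make the iteration structure concrete. This is only an illustration, not the GPU kernel from the original tutorial: the function name `matmul_tiled`, the row-major layout, and the `tile` parameter are assumptions made for this example. The two outer loops stand in for thread blocks, and each step of the `k0` loop stands in for one iteration that loads a tile of A and a tile of B.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// CPU emulation of tiled matrix multiplication, C = A * B.
// A is M x K, B is K x N, C is M x N, all stored row-major.
// matmul_tiled is a hypothetical helper, not part of CUDA, HIP, or OpenCL.
std::vector<float> matmul_tiled(const std::vector<float>& A,
                                const std::vector<float>& B,
                                int M, int N, int K, int tile) {
    assert(tile > 0);
    assert(A.size() == static_cast<std::size_t>(M) * K);
    assert(B.size() == static_cast<std::size_t>(K) * N);

    std::vector<float> C(static_cast<std::size_t>(M) * N, 0.0f);

    // (i0, j0) selects one tile of C; on a GPU this would be one thread block.
    for (int i0 = 0; i0 < M; i0 += tile) {
        for (int j0 = 0; j0 < N; j0 += tile) {
            // Each k0 step is one "iteration": one tile of A times one tile of B.
            for (int k0 = 0; k0 < K; k0 += tile) {
                const int iEnd = std::min(i0 + tile, M);
                const int jEnd = std::min(j0 + tile, N);
                const int kEnd = std::min(k0 + tile, K);
                for (int i = i0; i < iEnd; ++i) {
                    for (int j = j0; j < jEnd; ++j) {
                        float acc = C[static_cast<std::size_t>(i) * N + j];
                        for (int k = k0; k < kEnd; ++k) {
                            acc += A[static_cast<std::size_t>(i) * K + k] *
                                   B[static_cast<std::size_t>(k) * N + j];
                        }
                        C[static_cast<std::size_t>(i) * N + j] = acc;
                    }
                }
            }
        }
    }
    return C;
}
```

With `tile` set to 1 on a 2 x 2 problem, the element C0,0 is accumulated in exactly two `k0` iterations, which mirrors the C0,0 = A0,0 B0,0 + A0,1 B1,0 example in the text.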

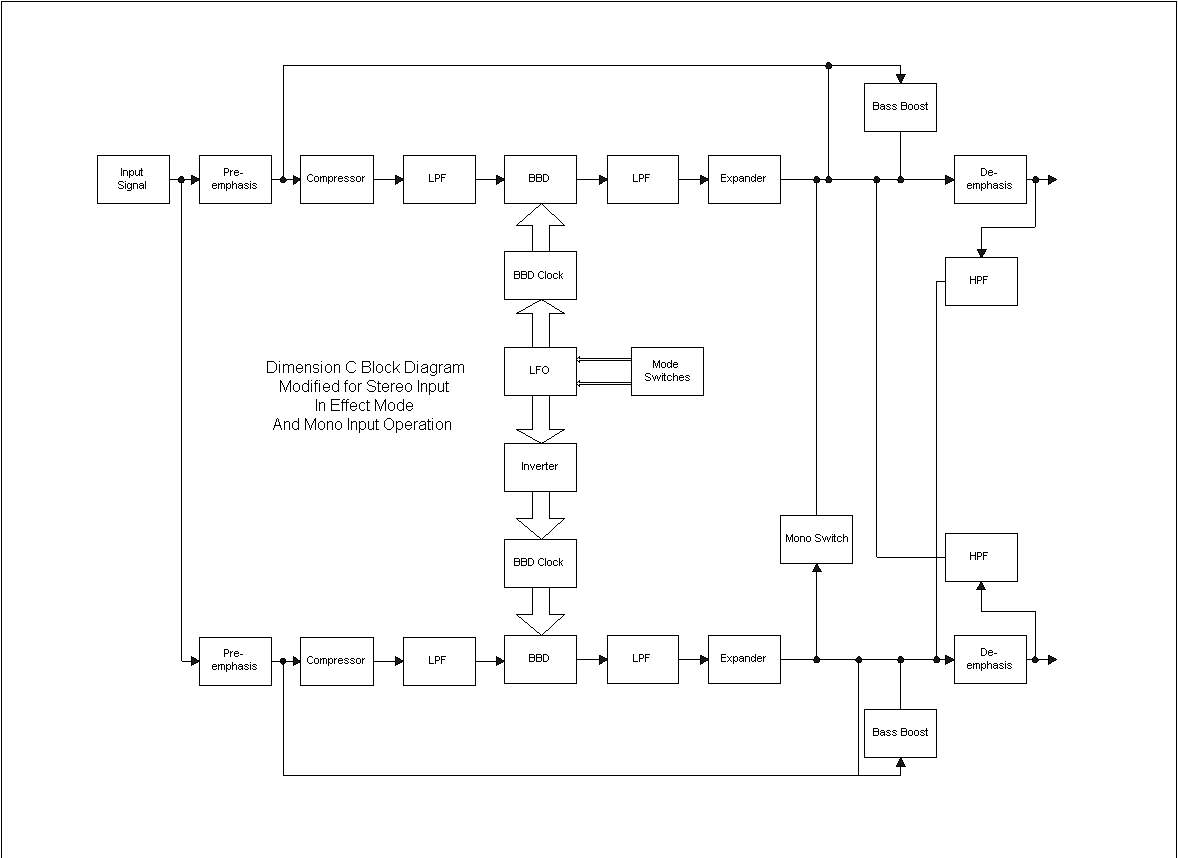

The matrixMul example on this page shows several techniques to optimize matrix multiplication on the GPU. Most of them are generic and can be applied to other applications. The problem: given an M x K matrix A and a K x N matrix B, multiply A with B and store the result into an M x N matrix C.

In the naive implementation, the amount of computation is 2 x M x N x K flop, while the amount of global memory access is 2 x M x N x K words. With 4-byte words, the "computation-to-memory ratio" is approximately 1/4 (flop/byte). Therefore, the naive implementation is bandwidth bound.

Increasing "Computation-to-Memory Ratio" by Tiling. To increase the "computation-to-memory ratio", tiled matrix multiplication is used. The GPU kernel computes C in multiple iterations. The figure shows a 32 x 32 matrix divided into four 16 x 16 tiles. To compute this, four thread blocks, each with 16 x 16 threads, are used. A launch configuration with 8 x 8 thread blocks looks like this: dim3 threads(8, 8, 1); // threads per block. dim3 grid(1 + (width-1) / 8, 1 + (height-1) / 8, 1); // blocks in grid.

OpenCL equivalent of finding consecutive indices in CUDA. In CUDA, to cover multiple blocks and thus increase the range of indices for arrays, we do something like this: dim3 dimGrid(9, 1); // 9 blocks will be launched. dim3 dimBlock(16, 1); // each block has 16 threads. The total number of threads in the grid is thus 16 x 9 = 144. The CUDA built-in dim3 variable gridDim tells you the number of blocks in each dimension of the grid. In OpenCL, the built-in function get_global_id(uint dimindx) is used.

On the OpenCL host side, the first few lines of the code simply load the OpenCL device program from disk, convert it to a string, and create a cl::Program::Sources object using the helper constructor.

The performance of these optimization techniques is shown in the figures. Note: these optimizations, which are tuned for the NVIDIA 8800 GT GPU at a matrix size of 4096 x 4096, could be sub-optimal for other GPUs and other matrix sizes.

matrixMul contents: 1 The matrixMul Problem; 4 Increasing "Computation-to-Memory Ratio" by Tiling; 7.2 Step 2: use 16 iterations to update C0,0; 7.3 Step 3: each thread stores one column of C0,0 from its register to global memory.
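The dim3 launch configurations quoted in this post map onto OpenCL sizes in a fairly mechanical way, which the following host-side sketch tries to show. It is plain C++ and calls no CUDA or OpenCL API: `Dim3`, `NDRangeSizes`, `blocks_for`, `to_ndrange`, and `global_id` are hypothetical names introduced only for this illustration. The sketch assumes a zero global offset, in which case the value returned by OpenCL's get_global_id for a dimension corresponds to blockIdx * blockDim + threadIdx in CUDA, and the OpenCL global work size is the CUDA grid size multiplied by the block size.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical stand-in for CUDA's dim3 (not the real CUDA type).
struct Dim3 {
    unsigned x, y, z;
};

// Hypothetical holder for the global and local work sizes of an OpenCL launch.
struct NDRangeSizes {
    std::size_t global[3];
    std::size_t local[3];
};

// Number of blocks needed to cover `extent` elements with `block` threads each,
// written the same way as the 1 + (width-1) / 8 expression in the text.
unsigned blocks_for(unsigned extent, unsigned block) {
    assert(extent > 0 && block > 0);
    return 1 + (extent - 1) / block;
}

// CUDA counts blocks per grid; OpenCL counts total work-items per dimension.
NDRangeSizes to_ndrange(Dim3 grid, Dim3 block) {
    NDRangeSizes r;
    r.local[0] = block.x;
    r.local[1] = block.y;
    r.local[2] = block.z;
    r.global[0] = static_cast<std::size_t>(grid.x) * block.x;
    r.global[1] = static_cast<std::size_t>(grid.y) * block.y;
    r.global[2] = static_cast<std::size_t>(grid.z) * block.z;
    return r;
}

// Consecutive index of a thread along one dimension, assuming zero global offset.
std::size_t global_id(unsigned blockIdx, unsigned blockDim, unsigned threadIdx) {
    assert(threadIdx < blockDim);
    return static_cast<std::size_t>(blockIdx) * blockDim + threadIdx;
}
```

For the 9-block, 16-thread example, `to_ndrange` yields a global size of 144 and a local size of 16 in the first dimension, and the last thread of the last block gets index 143. When the global size is rounded up like this, a kernel would normally still need a bounds check against the real array length.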
